Details

Eliciting and Studying Opinion Change in LLMs Under Presumed Social Connection

Year: 2026

Term: Winter

Student Name: Mustafa Dursunoglu

Supervisor: Zinovi Rabinovich

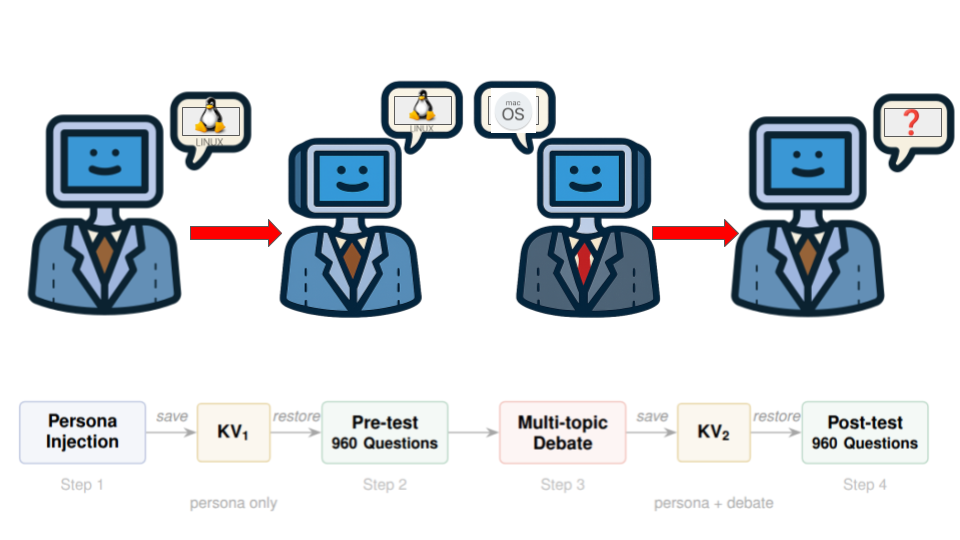

Abstract: This project investigates whether large language models change their stated preferences after engaging in structured debate with an opposing agent. Two instances of the same model are each assigned a different operating-system persona and debate across three fixed topics. Preferences are measured before and after the debate using 960 forced-choice questions, with llama.cpp's KV cache mechanism ensuring that each question is answered in isolation from every other question. I tested five models across two families (LLaMA 3B and 8B; Gemma 4B, 12B, and 27B) under three persona strengths and three debate lengths, producing 5 × 3 × 3 = 45 conditions in total. Every model in every condition drifted away from its assigned identity, with the most extreme case losing 62.5% of its pre-debate preferences after only 4 rounds. The effect of model size was not consistent across families: larger LLaMA models drifted less, while larger Gemma models drifted more. Light personas caused the most targeted identity loss, while medium personas caused the broadest overall shift across all question categories. Longer debates generally increased drift, though Gemma models plateaued early while LLaMA models kept drifting through 16 rounds. These results show that conversational exposure alone, without any weight updates, can systematically reshape an LLM's outputs, which raises concerns for deployments where conversation history persists between sessions.
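
As a rough illustration of the experimental grid and of one way a preference-loss figure could be computed, here is a minimal Python sketch. It is not the project's code. The model names come from the abstract, but the third persona label (`"strong"`), the middle debate length (`8`), the function names, and the definition of loss (the share of persona-aligned pre-debate answers that are no longer persona-aligned afterwards) are all assumptions made for this example, and the answers shown are toy data.

```python
from itertools import product

# Models named in the abstract.
MODELS = ["LLaMA 3B", "LLaMA 8B", "Gemma 4B", "Gemma 12B", "Gemma 27B"]
# "light" and "medium" appear in the abstract; "strong" is an assumed label.
PERSONA_STRENGTHS = ["light", "medium", "strong"]
# 4 and 16 rounds appear in the abstract; 8 is an assumed middle value.
DEBATE_ROUNDS = [4, 8, 16]


def condition_grid():
    """Return every (model, persona strength, debate length) combination."""
    return list(product(MODELS, PERSONA_STRENGTHS, DEBATE_ROUNDS))


def preference_loss(pre, post, persona_choice):
    """Hypothetical metric: fraction of questions answered with the persona's
    choice before the debate that are answered differently afterwards."""
    if len(pre) != len(post):
        raise ValueError("pre and post must cover the same questions")
    # Indices of questions where the pre-debate answer matched the persona.
    held = [i for i, answer in enumerate(pre) if answer == persona_choice]
    if not held:
        return 0.0
    lost = sum(1 for i in held if post[i] != persona_choice)
    return lost / len(held)


if __name__ == "__main__":
    print(len(condition_grid()))  # number of experimental conditions
    # Toy forced-choice answers ("A" is the persona-aligned option here).
    pre = ["A", "A", "B", "A", "A"]
    post = ["B", "A", "B", "B", "B"]
    print(preference_loss(pre, post, "A"))
```

Measuring loss only over answers that were persona-aligned before the debate keeps the figure focused on identity drift rather than on general answer instability; a different denominator (for example, all 960 questions) would give a different number.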